Synthesizing what my Pulsatile Tinnitus sounds like

In February 2022, I began to hear my heartbeat pulsing in my right ear.

I was sitting at the same desk where I’m typing this post today. At first, I thought I heard something faintly brushing in the room behind me, barely at the edge of perception.

Here’s what it sounded like: (🎧 headphones recommended!)

Later that night, I put in earplugs and noted that the sound was still there. After curious googling, I learned this is called Pulsatile Tinnitus, and found the delightfully named whooshers.com.

Many months later, I met an amazing team who specialize in Pulsatile Tinnitus at UCSF, where I was diagnosed with a likely DAVF (dural arteriovenous fistula). If you look closely, you might be able to spot it in these holographic explorations of my MRI scans. I’m very lucky that these sounds have been my only symptom, and I’m scheduled for an operation which could fix the underlying cause.

Since my situation is relatively rare, I wanted to take this opportunity to preserve my qualitative experience of these phantom sounds before they’re (hopefully!) gone. I hope this can provide a data point for the kinds of sounds one can experience.

What does my Pulsatile Tinnitus sound like?

I’ve read a broad variety of descriptions online, ranging from hissing, gurgling, beeping, and whooshing to whining and whistling. What does a whistle sound like in this context? It’s all very subjective, so having audio to listen to makes a huge difference. Some folks have been able to create recordings of their Pulsatile Tinnitus sounds. Mine were not detectable via stethoscope.

I wanted to have my own basis for expressing what I’m hearing, because it’s… really quite odd.

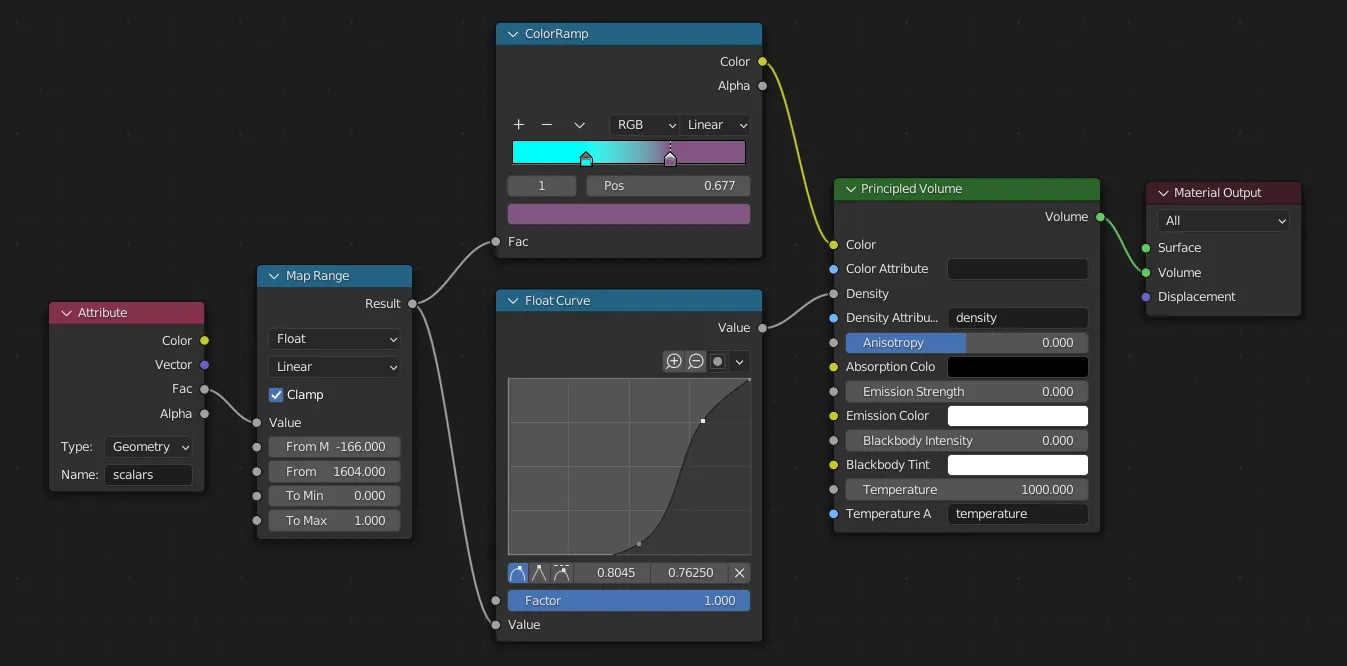

What follows is an attempt to portray my subjective experience of Pulsatile Tinnitus using Bespoke, a marvelous synthesizer created by Ryan Challinor. Bespoke’s node-based approach to synths is perfect for exploratory sound design. Coincidentally, I first learned of Bespoke while taking part in Synthruary, mere days before my onset.

Playing an unseen instrument

What really got my attention was when I was lying in bed, and I began to hear a whining sound:

I KNOW. Wild right?

Thankfully, it’s not very loud. Most background noise can drown it out. If you’re wearing headphones, adjust the volume so that the bassy whooshing noise is barely audible.

Getting this going in Bespoke for the first time was quite an emotional moment for me. After nearly 8 months of hearing such a weird, ineffable phenomenon, I finally had something I could point to and say: this is it!

I’d say this is about 90% similar to what I experience. It can be tricky to pin down all of the details, because I’m working from memory, but: qualitatively, this is very close. Initially, I was steeling myself for the frustration of spending hours fumbling around not hitting the mark, but I was shocked how quickly this came together. It’s kinda interesting how everything can be reflected by such elemental synths: just 2 shaped noise generators and an oscillator.
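For readers without Bespoke, here is a rough Python sketch of the same idea: two filtered noise layers plus a quiet sine oscillator, all swelling once per heartbeat. It is not a port of the Bespoke patches. The sample rate, filter coefficients, heart rate, whine frequency, and mix levels are all illustrative assumptions, and the function names are made up for this example.

```python
import math
import random
import struct
import wave

SAMPLE_RATE = 8000  # Hz; assumed low rate so the pure-Python loops stay quick


def pulse_envelope(t, bpm=66.0, sharpness=4):
    """Amplitude in [0, 1] that swells once per heartbeat (assumed shape)."""
    phase = (t * bpm / 60.0) % 1.0
    return math.sin(math.pi * phase) ** sharpness


def one_pole_lowpass(samples, alpha):
    """Basic smoothing filter: a smaller alpha gives a darker, bassier sound."""
    out, y = [], 0.0
    for x in samples:
        y += alpha * (x - y)
        out.append(y)
    return out


def render(seconds=4.0, bpm=66.0, whine_hz=620.0, seed=1):
    """Mix two shaped noise layers and one sine oscillator under a pulse."""
    rng = random.Random(seed)
    n = int(seconds * SAMPLE_RATE)
    white = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    low = one_pole_lowpass(white, alpha=0.05)  # bassy whoosh layer
    high = one_pole_lowpass(white, alpha=0.5)  # brighter, hissier layer
    out = []
    for i in range(n):
        t = i / SAMPLE_RATE
        env = pulse_envelope(t, bpm)
        whine = math.sin(2.0 * math.pi * whine_hz * t)
        # Mix levels are guesses; they sum to 0.85 so the output cannot clip.
        out.append(env * (0.6 * low[i] + 0.15 * high[i] + 0.1 * whine))
    return out


def write_wav(path, samples):
    """Write mono 16-bit PCM so the result can be auditioned in any player."""
    with wave.open(path, "wb") as f:
        f.setnchannels(1)
        f.setsampwidth(2)
        f.setframerate(SAMPLE_RATE)
        frames = b"".join(
            struct.pack("<h", int(max(-1.0, min(1.0, s)) * 32767))
            for s in samples
        )
        f.writeframes(frames)
```

Calling `write_wav("whoosh_sketch.wav", render())` produces a few seconds of pulsing noise with a faint tone underneath. It will not match the recordings above, but it shows how little machinery is involved.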

Variations on whooshes

In practice, what I hear has many modalities. The core sound is always a whoosh, and it presents in a few ways.

Often, the whoosh is longer (less staccato). This one I could actually effectively A/B because it’s what I was hearing at the time I was tweaking the synth. At the correct volume it’s very close.

Another common motif is a whoosh with descending pitch:

Notice how you can kind of hear the whistling in there? I think that this is what becomes the louder, more resonant whistle. Occasionally I hear it abruptly change from a whistle to just whooshes, typically with one or two clicks when it happens.

Oscillations

Here are a few variations on the whistles and whines. Sometimes the whoosh is more fuzzy and bubbly. The pitch range of the whine seems to vary quite a bit too, for instance, lower:

It can also be higher pitched. One thing I tried to represent here is how the pitch of the whine can be wobbly (courtesy of the pink “unstablepitch” node Bespoke provides):
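One way to approximate a wobbly pitch outside Bespoke is a bounded random walk on the oscillator frequency. The sketch below is only an assumption about how such an effect could be built, not a description of how Bespoke’s “unstablepitch” node works internally. The center frequency, drift depth, and step size are illustrative values.

```python
import math
import random

SAMPLE_RATE = 8000  # Hz (assumed)


def wobbly_pitch(seconds=2.0, center_hz=900.0, depth_hz=40.0,
                 step_hz=0.5, seed=7):
    """Return per-sample frequencies that drift randomly around center_hz."""
    rng = random.Random(seed)
    offset = 0.0
    freqs = []
    for _ in range(int(seconds * SAMPLE_RATE)):
        offset += rng.uniform(-step_hz, step_hz)
        offset = max(-depth_hz, min(depth_hz, offset))  # keep drift bounded
        freqs.append(center_hz + offset)
    return freqs


def render_whine(freqs, gain=0.1):
    """Sine oscillator that accumulates phase, so pitch changes do not click."""
    phase = 0.0
    out = []
    for f in freqs:
        phase += 2.0 * math.pi * f / SAMPLE_RATE
        out.append(gain * math.sin(phase))
    return out
```

Accumulating phase sample by sample, rather than computing `sin(2πft)` directly, keeps the waveform continuous while the frequency moves.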

Perhaps the biggest flaw in these examples is they don’t capture how dynamic the sound is. It doesn’t remain exactly in one pitch or timbre for as long as these samples. I did include some organic variation in them (e.g. by varying the tempo / my “heart rate”), but in practice, this is a weird, varying, chaotic system. I’ve thought about building a bigger synth which transitions between these sounds, but feel that would veer further into the realm of artistic interpretation, since it’ll be harder for me to compare with the real thing.

Bespoke save files

Here are the synth files, which can be opened in Bespoke.

In the order in which they appear above:

initial.bsk, whistle.bsk, current.bsk, descending.bsk, whine.bsk, whine-high.bsk

Like most other content on this blog, these synths are licensed Creative Commons BY-SA 4.0.